Most enterprise applications work perfectly well under normal conditions. The problems start when conditions stop being normal.

A retail platform handles steady traffic without issue until a flash sale begins. A financial services application processes thousands of transactions smoothly until quarter-end reporting hits. A healthcare system manages patient data reliably until a public health event drives sudden demand. These are the moments when performance engineering matters, and they’re the moments that separate well-architected systems from expensive failures.

For C-suite leaders, peak load scenarios represent both operational risk and reputation risk. When systems fail during critical business events, the costs extend far beyond technical recovery. Customer trust erodes, revenue opportunities disappear, and regulatory scrutiny increases. Yet many enterprises approach performance engineering as an afterthought, addressing it late in delivery cycles or only after problems emerge in production.

Why Peak Load Design Fails in Most Enterprises

The challenge isn’t a lack of awareness. Most technology teams understand that applications need to handle peak loads. The problem is execution.

In large enterprises, performance engineering often becomes a checkbox activity. Teams run load tests before launch, identify some bottlenecks, make surface-level optimizations, and declare the work complete. This approach misses the fundamental point. Performance engineering isn’t about testing an application after it’s built. It’s about designing the application architecture, data flows, and infrastructure patterns with peak scenarios as core requirements from the beginning.

Three factors consistently undermine performance engineering in enterprise environments.

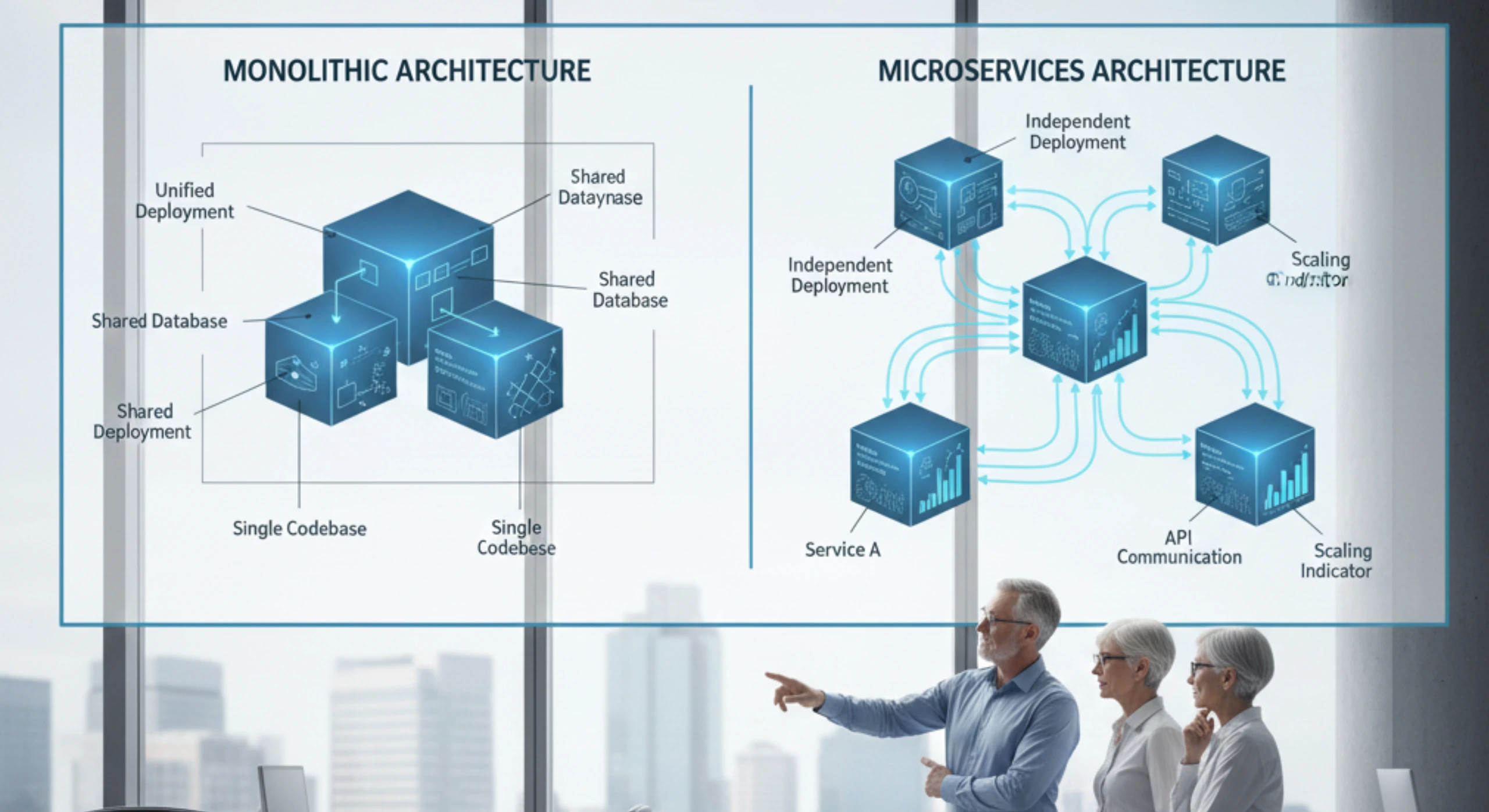

First, development teams design for average load because that’s what they experience during development and testing. When architects draw system diagrams or developers build features, they’re thinking about normal business operations. Peak scenarios feel like edge cases, even when they’re predictable and recurring. This mindset shapes every technical decision, from database design to caching strategies to API patterns.

Second, enterprise applications accumulate complexity over time. A system that performed well at launch degrades as new features get added, integrations multiply, and data volumes grow. Each addition seems minor in isolation, but the cumulative effect creates performance problems that only surface under load. By the time peak scenarios expose these issues, the underlying architecture is too entrenched to change easily.

Third, organizational structures work against effective performance engineering. In large enterprises, different teams own different layers of the technology stack. Infrastructure teams manage servers and networks. Database teams control data architecture. Application teams build features. Security teams enforce policies. When performance problems occur, the root cause often crosses these boundaries. Fixing it requires coordination that enterprise governance processes make difficult and slow.

What Changes When You Design for Peak Load

Effective performance engineering starts with a different design philosophy. Instead of building for typical conditions and hoping the system can scale when needed, you architect for peak load from the beginning and optimize down for efficiency during normal operations.

This inversion changes everything. Database schemas get designed with read-heavy peak patterns in mind, not just transactional efficiency. API endpoints get built with rate limiting and graceful degradation as core features, not additions. Caching strategies become architectural decisions, not performance fixes. Infrastructure provisioning includes explicit capacity for peak scenarios, not just average utilization targets.
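To make "rate limiting and graceful degradation as core features" concrete, here is a minimal sketch of one common approach: a token-bucket limiter that serves fresh data while capacity remains and falls back to cached data once the limit is reached. The names `TokenBucket`, `handle_request`, `fetch_fresh`, and `fetch_cached` are hypothetical and chosen for illustration. A production system would more likely rely on a gateway or shared limiter than on an in-process class like this one.

```python
import time
from typing import Any, Callable, Dict


class TokenBucket:
    """Hypothetical token-bucket limiter.

    Allows bursts of up to `capacity` requests and refills at `rate`
    tokens per second. The clock is injectable so the behavior can be
    tested without sleeping.
    """

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity      # start with a full bucket
        self.last = clock()         # time of the last refill

    def allow(self) -> bool:
        """Return True if a request may proceed, consuming one token."""
        now = self.clock()
        elapsed = now - self.last
        self.last = now
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def handle_request(bucket: TokenBucket,
                   fetch_fresh: Callable[[], Any],
                   fetch_cached: Callable[[], Any]) -> Dict[str, Any]:
    """Serve fresh data under the limit; degrade to cached data over it."""
    if bucket.allow():
        return {"source": "fresh", "data": fetch_fresh()}
    # Graceful degradation: a slightly stale answer instead of an error.
    return {"source": "cached", "data": fetch_cached()}
```

The design choice worth noting is that the over-limit path still returns something useful. Under a flash-sale spike, a slightly stale product page is usually a better outcome than a timeout.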

The technical patterns that emerge from this approach are well understood but rarely implemented consistently. Asynchronous processing moves work out of user-facing request paths. Event-driven architectures decouple components so load spikes in one area don’t cascade across the system. Database read replicas and caching layers protect core data stores from query storms. Circuit breakers and bulkheads contain failures when individual components reach their limits.
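Of the patterns above, the circuit breaker is the easiest to show in a few lines. The sketch below is a simplified illustration, not a reference implementation: `CircuitBreaker`, `CircuitOpenError`, and the `failure_threshold` and `reset_timeout` parameters are hypothetical names, and the code is not thread-safe. After a run of consecutive failures it stops calling the struggling dependency and fails fast, then allows a single trial call once a cooling-off period has passed.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is not attempted."""


class CircuitBreaker:
    """Hypothetical, single-threaded circuit breaker for illustration."""

    def __init__(self, failure_threshold: int = 3,
                 reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None   # None means "closed"

    def call(self, func: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: reject immediately so callers are not left waiting
                # on a dependency that is already overloaded.
                raise CircuitOpenError("dependency unavailable; failing fast")
            # Timeout elapsed: half-open, so let one trial call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            half_open = self.opened_at is not None
            if half_open or self.failures >= self.failure_threshold:
                # Open the breaker, or re-open it after a failed trial.
                self.opened_at = self.clock()
            raise
        # Success: close the breaker and reset the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A caller would wrap each outbound dependency call, for example `breaker.call(fetch_inventory, sku)` with a hypothetical `fetch_inventory` function, and treat `CircuitOpenError` as a signal to serve a fallback response.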

What makes these patterns difficult in enterprise environments isn’t technical complexity. It’s the organizational change required to implement them consistently across large application portfolios. Teams need clear ownership of performance outcomes, not just feature delivery. Architecture reviews need to enforce performance patterns before code gets written, not after problems emerge. Testing environments need to simulate realistic peak loads, not sanitized datasets that fit on developer laptops.

The Execution Challenge

Even when enterprises commit to proper performance engineering, execution frequently breaks down. The work requires specialized skills that most development teams lack. Performance engineers who can analyze system behavior under load, identify bottlenecks across complex distributed systems, and design effective solutions are rare. Enterprises end up with a small performance team trying to support dozens of application delivery efforts, and that team becomes a source of delay in its own right.

Tooling adds another layer of complexity. Load testing frameworks, application performance monitoring systems, distributed tracing platforms, and capacity planning tools each require expertise to use effectively. Integration across these tools is often manual and fragile. Getting meaningful insights from raw performance data requires experience that most teams don’t have.
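One small example of why raw performance data is easy to misread: an average latency can look tolerable while a meaningful share of users wait far longer. The sketch below uses Python's standard `statistics` module to compare the mean with tail percentiles. The function name `summarize_latencies` and the sample values are invented for illustration and are not measurements from any real system.

```python
import statistics
from typing import Dict, List


def summarize_latencies(latencies_ms: List[float]) -> Dict[str, float]:
    """Return the mean alongside p50, p95, and p99 latency.

    statistics.quantiles(data, n=100) returns 99 cut points, so
    index 49 is the 50th percentile, 94 the 95th, and 98 the 99th.
    """
    cuts = statistics.quantiles(latencies_ms, n=100)
    return {
        "mean": statistics.mean(latencies_ms),
        "p50": cuts[49],
        "p95": cuts[94],
        "p99": cuts[98],
    }


# Invented sample: 95 fast requests and 5 very slow ones.
sample = [20] * 95 + [2000] * 5
summary = summarize_latencies(sample)
for name, value in summary.items():
    print(f"{name}: {value} ms")
```

In this invented sample the median stays at the fast value while the 95th and 99th percentiles sit far above the mean, which is the pattern that tends to appear first when a system approaches its limits under peak load.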

Timing makes the problem worse. Performance issues typically surface late in delivery cycles, when applications are nearly ready for production. At this point, addressing fundamental architecture problems means significant rework and schedule delays. The pressure to launch on time leads to compromises. Teams apply tactical fixes that address immediate symptoms but leave underlying weaknesses in place. The technical debt accumulates, and the next peak load event exposes new problems.

How Ozrit Approaches Enterprise Performance Engineering

Ozrit’s delivery model addresses these execution challenges directly through structured engagement and senior technical involvement from the start.

When Ozrit begins a performance engineering engagement, the approach isn’t about deploying large offshore teams to run load tests. A senior performance architect with 15-plus years of enterprise experience joins the client team within the first week. This person has designed systems for organizations running billions of transactions annually and knows what architectural patterns work at scale. They review the existing architecture, understand the business’s peak load scenarios, and identify gaps before development work begins.

This early involvement changes the trajectory of the entire program. Instead of discovering performance problems late, the team designs solutions with peak scenarios as first-class requirements. Database schemas, API contracts, caching strategies, and infrastructure patterns all get reviewed against realistic load projections. Problems that would have required extensive rework later get addressed while they’re still easy and inexpensive to fix.

Ozrit brings structured capacity to support this work. The company maintains a core team of approximately 800 engineers, including specialists in distributed systems, database optimization, cloud architecture, and application performance monitoring. When a client engagement requires specific expertise, those resources onboard quickly because they’ve worked on similar programs before. A database specialist who optimized query performance for a financial services client can apply that knowledge to a retail platform within days, not months.

The onboarding process reflects this experience. Ozrit doesn’t require clients to spend weeks writing detailed specifications or creating extensive documentation. The engagement starts with focused working sessions where senior Ozrit architects understand the business context, review existing systems, and identify the critical paths that need performance engineering attention. Within two to three weeks, the team is delivering value through architecture reviews, load testing strategies, and optimization recommendations.

Delivery follows a clear cadence. Initial performance assessments typically complete within four to six weeks, providing executives with a clear view of risks and required investments. Architecture improvements and implementation work run in structured phases with defined milestones and measurable outcomes. Clients know what’s being delivered, when it will be ready, and what it will cost.

Support continues after launch. Ozrit provides 24/7 monitoring and response for production applications, ensuring that when peak load events occur, experienced engineers are available to respond immediately. This isn’t a helpdesk answering tickets. It’s performance specialists who understand the system architecture and can diagnose and resolve issues in real time.

Making Performance Engineering Sustainable

The goal isn’t just to fix performance problems in one application. For most enterprises, the real value comes from building internal capability that improves performance across the entire application portfolio.

Ozrit structures engagements to transfer knowledge systematically. Client teams work directly with Ozrit architects throughout the engagement, learning the analysis techniques, design patterns, and optimization strategies that apply across different applications. This happens through working sessions, architecture reviews, and paired problem-solving, not through training courses or documentation.

The benefit extends beyond individual team members. When client architects see how performance engineering integrates into architecture decisions, governance processes, and delivery practices, they can replicate that approach on other programs. The patterns and standards that emerge from one engagement become templates for future work.

This approach recognizes a reality about enterprise technology organizations. Sustainable improvement comes from changing how teams work, not just from fixing individual systems. Performance engineering needs to become part of how the enterprise designs, builds, and operates applications, embedded in architecture standards, delivery processes, and team capabilities.

The Leadership Perspective

For executives overseeing large technology portfolios, performance engineering often feels like a technical problem that should stay with the technology teams. The reality is different. How an enterprise approaches performance engineering reflects deeper questions about risk management, delivery capability, and operational maturity.

Organizations that design for peak load from the beginning deliver more reliably, respond to business opportunities faster, and carry less technical debt. They avoid the costs and reputation damage that come from high-profile system failures during critical business events. Just as importantly, they build technology teams that understand how to deliver complex systems at scale, a capability that compounds in value over time.

The question isn’t whether to invest in performance engineering. The question is whether to address it systematically as part of how the enterprise builds technology, or reactively when problems force attention. That choice shapes not just individual application outcomes, but the overall capability and credibility of the technology organization.